The Core Mental Model: AI as a Mirror

Understanding what AI actually does — and why that changes everything

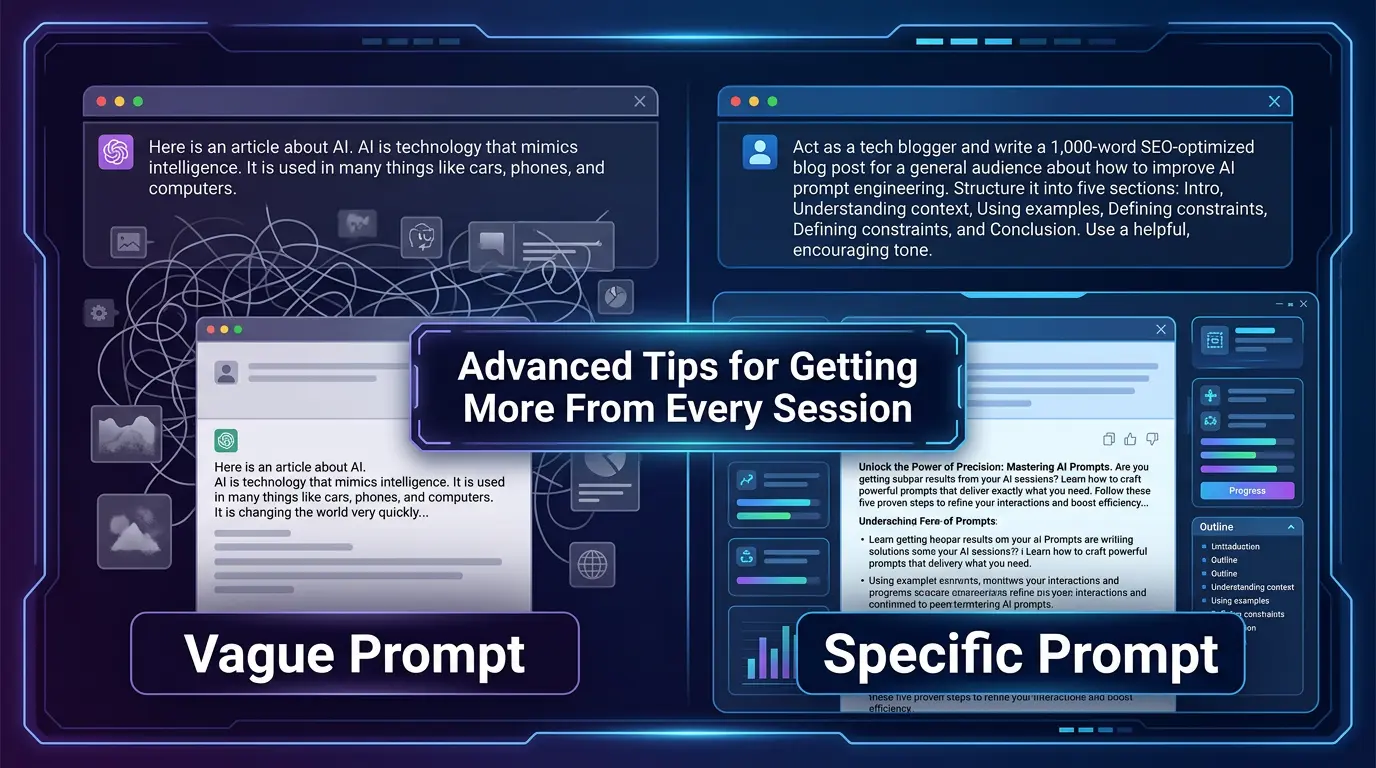

The most useful way to think about AI is as a very fast, very well-read mirror. It reflects back the quality of your thinking. A muddled prompt produces a muddled response. A clear, specific, well-structured prompt produces clear, specific, well-structured output. This is more than a figure of speech: the model builds its response from the text you give it, so whatever your prompt leaves unspecified gets filled in with generic defaults.

When you ask “what should I write about?” you’re giving the AI almost no signal. When you ask “I run a freelance UX consulting practice targeting early-stage SaaS startups — what are three blog post angles that would attract founders who are about to hire their first designer?”, you’ve given it a rich signal. The output will reflect that difference immediately.

Before writing a prompt, ask yourself: “If someone handed me exactly this input with zero additional context, what would I produce?” If the honest answer is “something generic,” you need more context in your prompt.

Write your prompt in a notepad first. Read it back as if you’re someone who knows nothing about your situation. Fill the gaps you notice. Then paste it. This adds about 90 seconds and often improves the output noticeably.

💡 Key Insight

The quality ceiling of your AI output is set by the quality of your input. There’s no technique that reliably compensates for a vague or under-specified prompt. Invest in the setup, not just the ask.