Why Most Prompt Libraries Fail at Scale

The structural problems that emerge as teams grow from 5 to 50 people

The failure mode is almost always the same, regardless of industry or team size. Someone enthusiastic about AI creates a shared folder in Google Drive, Notion, or Confluence (the tool doesn’t matter), dumps prompts into it, and calls it a library. For the first three months, it gets light usage. By month six, it’s a graveyard with no clear owner, no consistent format, and no way to tell which prompts are tested and which are experimental.

The structural reasons this happens are predictable:

Each contributor saves prompts in their own format. Some include context, some don’t. Some use variable placeholders, some hardcode specifics. Within weeks the library becomes unreadable to anyone who didn’t write a given entry.

There’s no way to distinguish a prompt tested across 200 outputs from one someone wrote in five minutes and never used again. Every entry looks equally authoritative, so one bad result from an untested prompt casts doubt on all of them, and users stop relying on the library.

As the library grows, it becomes harder to navigate. People stop searching and start writing prompts from scratch, duplicating work and fragmenting institutional knowledge across personal files and notes apps.

Models evolve. Business requirements change. A prompt written in 2024 for summarizing legal documents may need adjustment by 2026. Without an update process, the library drifts further out of date with every change.
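All four problems point toward the same remedy: one entry format that every contributor fills in the same way. Below is a minimal Python sketch under stated assumptions. The `PromptEntry` schema, its field names, the 180-day review cadence, and the `find` helper are hypothetical illustrations, not a standard tool. Each entry uses `$placeholders` (via the standard library's `string.Template`) instead of hardcoded specifics, records its status and how many outputs were reviewed so tested prompts stand apart from experiments, carries tags for search, and can report when its review date has passed.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from enum import Enum
from string import Template


class Status(Enum):
    EXPERIMENTAL = "experimental"  # written but not yet validated
    TESTED = "tested"              # checked against real outputs


@dataclass
class PromptEntry:
    # Hypothetical schema: every contributor fills in the same fields.
    title: str
    purpose: str          # the context a reader needs before reusing the prompt
    template: str         # $placeholders instead of hardcoded specifics
    last_reviewed: date
    tags: set[str] = field(default_factory=set)    # used by find() for search
    status: Status = Status.EXPERIMENTAL
    outputs_reviewed: int = 0                       # evidence behind the status
    review_every: timedelta = timedelta(days=180)   # assumed review cadence

    def render(self, **values: str) -> str:
        # Template.substitute() raises KeyError if a placeholder is left unfilled.
        return Template(self.template).substitute(values)

    def label(self) -> str:
        # What a reader sees next to the title: the status plus the evidence for it.
        return f"{self.status.value}, {self.outputs_reviewed} outputs reviewed"

    def review_due(self, today: date) -> bool:
        # True once the scheduled review date has passed.
        return self.last_reviewed + self.review_every < today


def find(library: list[PromptEntry], *tags: str) -> list[str]:
    """Hypothetical helper: titles carrying every requested tag, tested first."""
    wanted = {t.lower() for t in tags}
    hits = [e for e in library if wanted <= {t.lower() for t in e.tags}]
    # False sorts before True, so TESTED entries come first, then by title.
    hits.sort(key=lambda e: (e.status is not Status.TESTED, e.title))
    return [e.title for e in hits]


library = [
    PromptEntry(
        "Contract summary",
        "Summarize a contract for a non-lawyer reader.",
        "Summarize this $doc_type in $n bullet points:\n$text",
        last_reviewed=date(2024, 3, 1),
        tags={"legal", "summarize"},
        status=Status.TESTED,
        outputs_reviewed=200,
    ),
    PromptEntry(
        "Clause explainer",
        "Explain a single clause in plain English.",
        "Explain this clause in plain English:\n$clause",
        last_reviewed=date(2025, 12, 1),
        tags={"legal"},
    ),
]

print(find(library, "legal"))  # → ['Contract summary', 'Clause explainer']
print(library[0].label())      # → tested, 200 outputs reviewed
print([e.title for e in library if e.review_due(date(2026, 1, 10))])  # → ['Contract summary']
```

Listing tested entries first in search results is only one possible design choice. What matters is that the status and review date travel with the entry itself rather than living in someone's memory.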

💡 Core Insight

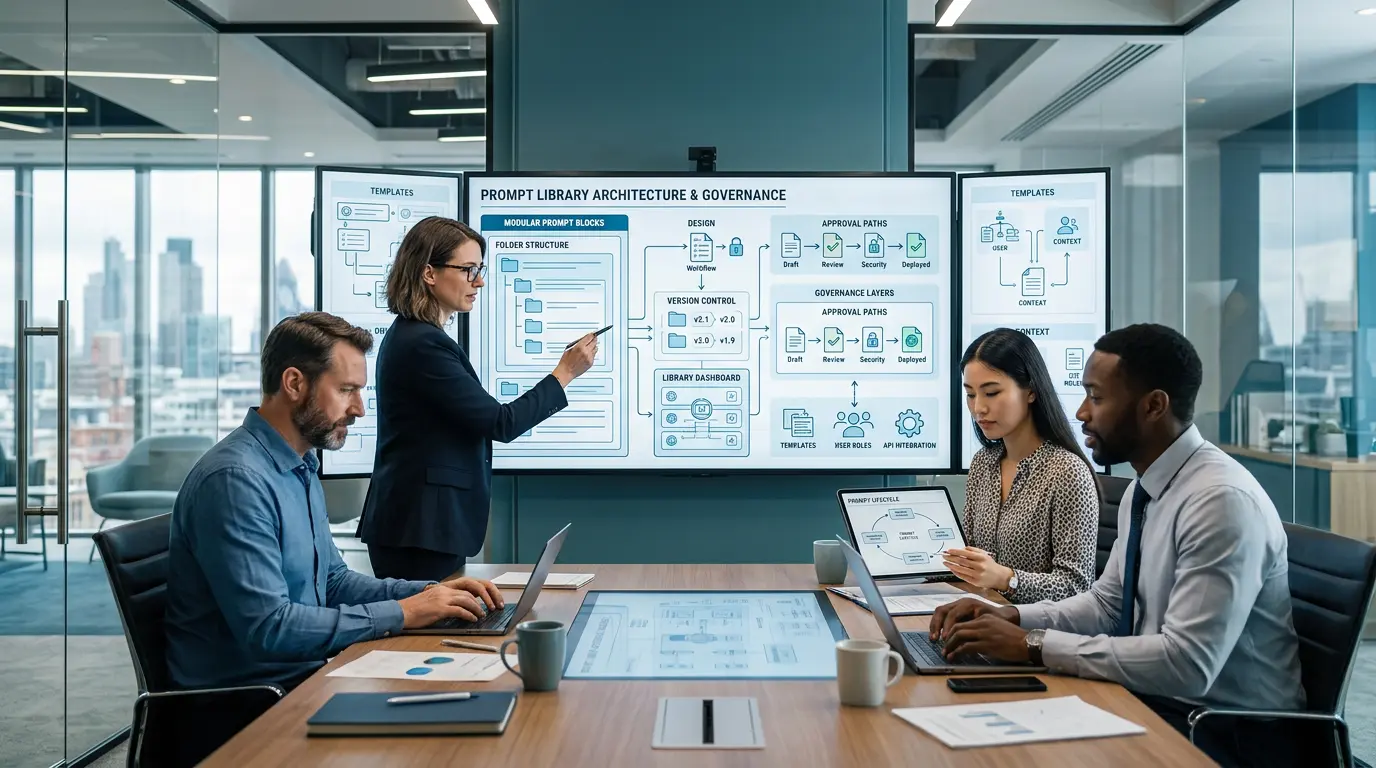

The teams with the most effective prompt libraries treat them like internal software products — with a product owner, defined standards, versioning, and regular reviews. The tooling is secondary. The discipline is what matters.